Three Indian American researchers at the University at Buffalo are developing a tool to spot AI‑generated radiology reports to combat the growing threat of falsified medical documentation and bogus insurance claims.

While not common, AI-generated medical forgeries, whether reports that impersonate a doctor or real X-ray images with nonexistent fractures inserted, have the potential to cause serious problems in the medical and insurance industries.

To combat this growing threat, a team of UB researchers has developed what they believe is the first AI system designed to distinguish between radiology reports written by humans and those generated by AI, according to a university release.

“With generative AI becoming more capable of producing remarkably convincing radiology reports, there’s a greater risk of fabricated reports being used to falsify medical histories and support fraudulent claims,” said lead research investigator Nalini Ratha, PhD, SUNY Empire Innovation Professor in the Department of Computer Science and Engineering at UB.

“Radiology reports have highly specialized structure, vocabulary and stylistic norms, making general-purpose detectors unreliable. Therefore, our goal was to build a detection framework designed specifically for radiology that can distinguish clinician-written medical documentation from synthetic text before it reaches clinical or insurance workflows.”


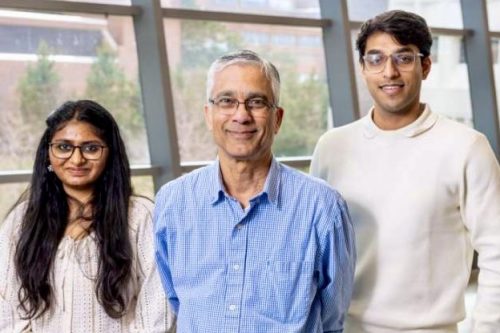

The UB team – consisting of Ratha and PhD students Arjun Ramesh Kaushik and Tanvi Ranga – presented their study, “Detecting Synthetic Radiology Reports Using Style Disentanglement,” at the 2025 GenAI4Health workshop held during the Conference on Neural Information Processing Systems in San Diego in December.

As part of their study, Ratha, Ranga and Kaushik built a dataset of 14,000 pairs of radiologist-authored and AI-generated chest X-ray reports. They used two approaches to generate the synthetic reports: paraphrasing real radiologist reports using advanced LLMs and generating full reports directly from chest radiographs using medical vision-language models (VLMs).

The dataset is the first to combine both text‑based and image‑based synthetic radiology reports, researchers say, marking a major step forward for trustworthy AI research in health care.

All samples focused solely on the findings section, which is the portion of a report that captures the radiologist’s detailed analysis and includes extensive domain-specific terminology and descriptive language.

“The findings section is both central to authorship attribution and the one most susceptible to exploitation,” Ratha said.

The next phase of the study involved developing the authorship‑detection framework built to operate on this dataset. Although LLMs can replicate clinical terminology, they struggle to mirror the stylistic characteristics of human‑authored radiology reports.


Recognizing this gap, the UB researchers created a BERT–Mamba–based detection model designed to separate each report’s stylistic features from its underlying clinical content.

The UB model distinguished human‑written reports from synthetic ones with high accuracy and consistency, achieving Matthews correlation coefficient (MCC) scores between 92% and 100% in both text-to-text and image-to-text categories. The framework also held up in cross‑LLM tests, correctly spotting AI‑generated reports from models it had never seen before.

“What we found is LLMs tend to write in polished, expansive language, while clinicians write in concise, direct terms. Radiologists use straightforward terms like ‘heart’ or ‘lung.’ LLMs often replace them with more elaborate phrases like ‘pulmonary vasculature,’ which became a clear stylistic signal that our model learned to separate,” Ranga said.

Despite the impressive results, the team is continuing to refine the dataset and benchmark detection model as they prepare the model for public release.

The researchers also expect that AI systems, as they become more sophisticated and tailored to fields like radiology, can save radiologists significant time and help them manage increasing workloads.

While his team’s research focused on radiology, Ratha says the implications of their study extend beyond health care. The same style‑based detection approach could also help safeguard industries that are increasingly vulnerable to AI‑generated forgeries, fabricated records and synthetic narratives, including insurance, finance, journalism, education and the legal profession.