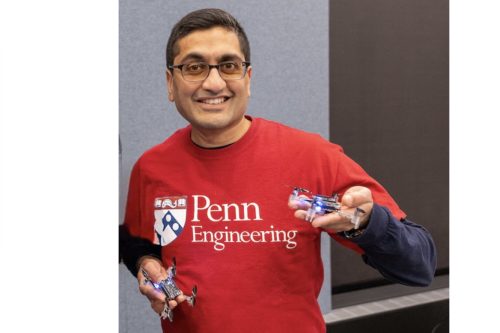

Researchers led by Rahul Mangharam, an Indian American professor at Penn’s School of Engineering and Applied Science, are investigating what happens when artificial intelligence agents leave the digital world and enter the physical world.

Mangharam, a professor of electrical and systems engineering and principal investigator of the Safe Autonomous Systems Lab (xLAB), and his team are tackling that question through a new three-year international collaboration on Swarm AI. The project brings together three universities to study how large teams of physical AI agents can cooperate, compete, and act safely.

“Most of today’s AI agents live purely in software,” says Mangharam. “We’re moving toward physical AI, systems that don’t just generate answers, but act in the real world. And once AI operates in physical space, it has to deal with real constraints and real consequences.”

Unlike digital agents, physical agents must obey the laws of physics. They must avoid collisions, respect safety boundaries, and coordinate with teammates. According to an article in Penn Today, the research centers on cooperation and coordination in adversarial games: scenarios in which teams of agents must strategize against opponents while maintaining internal cohesion.

“A key technical focus is understanding intent,” says Mangharam. “Agents must infer what other agents, human or machine, are trying to achieve and adjust accordingly. They must coordinate without centralized control and respond to dynamic, uncertain environments. This project brings together research in machine learning for multi-agent systems that use game theory to cooperate and compete.”

At large scales, this becomes both an algorithmic and a systems problem: how to design distributed algorithms that let tens, hundreds, or even thousands of agents make consistent, safe decisions in real time. One distinctive aspect of the project is its emphasis on neurosymbolic AI, which combines neural networks with structured, human-encoded knowledge.

“You can’t just throw AI at a problem and expect it to magically figure everything out,” says Mangharam. “There’s always human context—hard-earned domain knowledge, engineering realities, safety rules—that doesn’t live neatly in data and can’t simply be learned from scratch. If we want these systems to work in the real world, we have to teach them the fundamentals we already understand.”

“By building those physical limits, safety boundaries and operational principles directly into the system, we develop physics-informed neural networks, or PINNs, which give AI the necessary domain knowledge on how the world works, the expectations and the lines you can’t cross,” he says.

Mangharam received the 2016 US Presidential Early Career Award for Scientists and Engineers (PECASE) from then-President Barack Obama for his work on life-critical systems.

He also received the 2016 Department of Energy’s CleanTech Prize (Regional), the 2014 IEEE Benjamin Franklin Key Award, the 2013 NSF CAREER Award, and the 2012 Intel Early Faculty Career Award, and he was selected by the National Academy of Engineering for the 2012 and 2017 US Frontiers of Engineering.

He has won several ACM and IEEE best paper awards in cyber-physical systems, controls, machine learning, and education. Mangharam received his PhD in Electrical & Computer Engineering from Carnegie Mellon University.