OpenAI has issued a formal apology to the residents of Tumbler Ridge, a small Canadian community, following controversy over its handling of a data-related incident that raised concerns about transparency and public safety. The apology, led by CEO Sam Altman, comes after scrutiny over why the company did not promptly alert local authorities despite prior knowledge of potential risks.

The incident traces back to OpenAI’s internal awareness of unusual activity linked to its systems, which some reports suggest could have had implications for the town. Critics argue that the company recognized warning signs but failed to escalate them in time. This delay has fueled questions about how major AI firms balance internal assessment with public accountability.

In its apology letter, OpenAI acknowledged shortcomings in its response. Altman stated, “We deeply regret how we handled this situation and the concern it caused for the people of Tumbler Ridge.” The company emphasized that it has since reviewed its internal protocols and pledged to improve communication with relevant authorities.

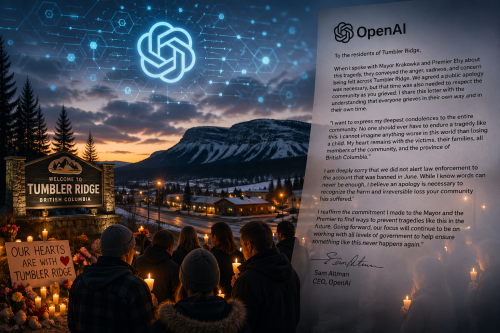

In the letter, addressed to residents, Altman acknowledged both the emotional toll of the tragedy and the company’s failure to act sooner. “When I spoke with Mayor Krakowka and Premier Eby about this tragedy, they conveyed the anger, sadness, and concern being felt across Tumbler Ridge. We agreed a public apology was necessary, but that time was also needed to respect the community as you grieved,” he wrote.

Altman extended condolences to those affected, stating, “I want to express my deepest condolences to the entire community. No one should ever have to endure a tragedy like this. I cannot imagine anything worse in this world than losing a child.” He added that his thoughts remain with the victims, families, and the broader province of British Columbia.

Crucially, the letter acknowledged a lapse in protocol. “I am deeply sorry that we did not alert law enforcement to the account that was banned in June,” Altman wrote, recognizing the company’s failure to escalate concerns that may have been relevant to authorities.

He concluded by reaffirming OpenAI’s commitment to change. “Going forward, our focus will continue to be on working with all levels of government to help ensure something like this never happens again.”

This statement reflects growing pressure on technology companies to act swiftly when potential risks emerge. By admitting fault, OpenAI signals a shift toward greater transparency, though critics note that such acknowledgment often follows public exposure rather than proactive disclosure. The situation underscores the broader challenge of ensuring accountability in rapidly evolving AI systems.

A central point of contention remains why authorities were not alerted earlier. OpenAI explained that it initially assessed the issue as limited in scope and chose to investigate internally before taking external action. However, local officials and residents have expressed frustration, arguing that earlier notification could have mitigated uncertainty and allowed for precautionary measures.

Community reactions have been mixed. While some residents appreciate the apology, others remain skeptical about the company’s judgment. Local leaders have called for clearer guidelines on when tech companies must inform authorities, highlighting the need for stronger oversight as AI technologies expand into real-world applications.

In response to the fallout, OpenAI has committed to implementing new safeguards, including faster escalation procedures and closer coordination with local governments. The company also indicated plans to engage more directly with affected communities to rebuild trust.

The Tumbler Ridge episode serves as a cautionary tale for the tech industry. It highlights the importance of timely communication, especially when emerging technologies intersect with public welfare. As AI continues to shape everyday life, companies face increasing expectations to act responsibly, transparently, and in partnership with the communities they impact.