By Soumoshree Mukherjee

Editor’s note: This article is based on insights from a podcast series. The views expressed in the podcast reflect the speakers’ perspectives and do not necessarily represent those of this publication. Readers are encouraged to explore the full podcast for additional context.

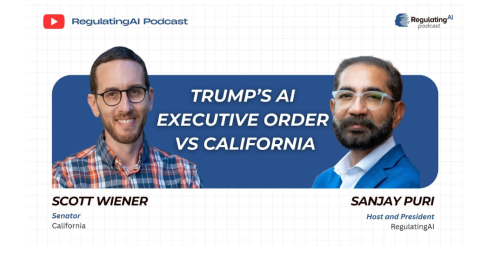

Artificial intelligence is moving faster than any technology in human history, and the people shaping its future aren’t just engineers and founders. They’re lawmakers. On a recent episode of “The RegulatingAI Podcast,” hosted by Sanjay Puri, California State Senator Scott Wiener offered a refreshingly grounded look at how we should govern AI without crushing innovation. His take? Regulation isn’t about slowing progress. It’s about making sure progress doesn’t blow up in our faces.

Wiener’s sense of urgency comes from lived experience. His family fled violence and repression. He lived through the HIV/AIDS crisis in San Francisco, when government inaction literally cost lives. For him, regulation isn’t abstract—it’s survival.

That’s why he looks at AI and sees both breathtaking possibility and genuine danger.

On the bright side, AI could cure diseases, fight climate change, predict and prevent wildfires, and turbocharge scientific discovery. But he’s equally clear-eyed about the risks: catastrophic attacks on the power grid, bio and chemical weaponization, mass cybercrime, deepfakes, and rapid employment disruption. The tech world’s favorite line — “move fast and break things” — becomes a lot less cute when the thing that breaks is societal stability.

And historically, the U.S. hasn’t exactly been great at staying ahead of tech. Wiener said the U.S. still has no federal data privacy law, no deepfake regulations, and almost no guardrails for social media. AI can’t be another “we’ll deal with it later” moment.

If it sometimes feels like California is making rules for the entire tech world… it kind of is, Wiener notes. With the world’s fourth- or fifth-largest economy and deep roots in the tech ecosystem, California has repeatedly stepped in where Congress hasn’t: on auto emissions, data privacy, and now AI. Wiener walked through the state’s evolving strategy. First came SB 1047, a liability-focused bill that would have penalized companies for catastrophic failures. Industry hated it; the governor vetoed it.

But instead of packing up, Wiener pivoted. The result was SB 53, a transparency-driven bill requiring AI labs to disclose whether they’re using safety protocols for frontier models. It also introduced powerful whistleblower protections, because sometimes the people on the inside are the only ones who can spot a looming disaster. This wasn’t a retreat; it was a strategic regroup to ensure something strong passed.

In a perfect world, AI governance would be federal. One set of rules, applied everywhere. But Wiener warns that preempting states could backfire if national laws end up weak, unenforced, or vulnerable to political swings.

And with San Francisco serving as the “beating heart” of AI innovation, California can’t afford to sit around waiting for Congress to wake up.

Here’s where Wiener gets especially candid: AI could reshape the job market faster than anything in history. Past revolutions played out over generations. AI is compressing that into years—or months. Mishandled, it could spark economic hardship and social unrest. And desperate people are easy targets for opportunistic political “grifters.”

States can’t solve this alone. Only the federal government has the economic tools—tax, spend, redistribute—to cushion the blow.

Wiener’s approach is simple: regulate where things are underregulated, pull back where they’re overregulated. Housing? Too much red tape. Technology? Not nearly enough.

It’s not ideology. It’s problem-solving.

And if AI is going to transform the world, we’ll need a lot more of that, he says.