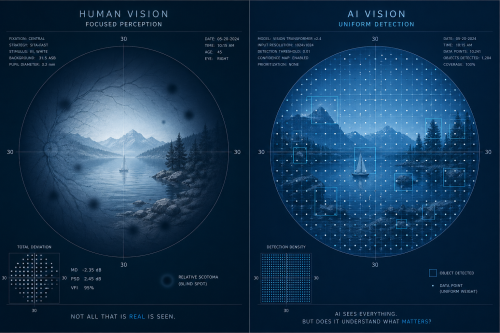

We keep asking whether AI is smarter than humans, but that is the wrong question. The more important issue is not intelligence, but what we each fail to see. The real gap between humans and AI is not how much we can think, but what we consistently miss. That is where the blind spots live, and that is where the real competition is happening.

Work from thinkers like Vivienne Ming and Jim Collins points to the same idea from different angles. Intelligence by itself does not decide outcomes. What matters is how we see the world, how that view gets disrupted, and whether we understand the limits of our own perspective. Success or failure, whether for people or AI, comes down to what gets noticed and what gets missed.

Humans always look at the world through a “frame,” as Collins describes it. A frame is just the mental window we use to make sense of reality. It helps us simplify complexity so we can act. The problem is that while the frame gives us clarity, it also hides everything outside it.

For example, a doctor may focus on a patient’s lab results and miss subtle symptoms in their behavior. A business leader may focus on financial metrics and miss declining team morale. In both cases, the frame creates confidence—but also blind spots.

AI has its own kind of frame, but it works differently. It is built from training data and patterns. It can process far more information than a human, but it does not understand what is important unless it has been taught what matters. It can confuse what is common with what is meaningful.

For example, an AI model might detect a pattern that suggests a diagnosis or financial trend, but it does not “know” if that pattern actually matters in the real world. It produces answers that sound confident, even when the deeper meaning is missing. That is its blind spot.

So, the key difference is not who is more capable, but how humans and AI are blind in their own ways. Humans compress the world too much—we filter out too much and miss details. AI expands too much; it includes everything but does not prioritize meaning or context. In both cases, we trust the output, which is exactly what makes blind spots so dangerous.

These blind spots usually stay hidden until something disrupts the system. In life, that disruption is what Collins describes as a “cliff”—a moment when something breaks your normal way of operating. It could be a failure, a sudden change, or an unexpected outcome that forces you to rethink everything.

For example, losing a job may suddenly reveal that what you thought was stable was actually fragile. A medical misdiagnosis might reveal that what looked clear was incomplete. In those moments, the old frame no longer works, and you are forced to see what you were missing all along.

After a cliff comes “fog.” This is the period where nothing feels clear. The old way of thinking is gone, but a new one has not formed yet. This is often uncomfortable, and most people try to escape it quickly through snap decisions.

In today’s world, AI makes that temptation even stronger. When things are unclear, we can instantly ask ChatGPT for answers. But that speed can be misleading. It can pull us out of uncertainty too quickly, before we understand what we were missing in the first place.

For example, after a difficult decision at work, instead of sitting with uncertainty and thinking through what went wrong, we might immediately ask an AI agent for a solution. The answer may sound right, especially when it tells us what we want to hear, but we may never fully understand the deeper problem.

As information becomes easier to access, attention becomes the real limitation. We are no longer limited by what we know, but by what we choose to look at. And when we rely too much on systems that give answers, we slowly lose awareness of what we are not even asking.

The best human–AI systems are not the ones that remove uncertainty. They are the ones that keep it long enough to be useful. That means using AI not as the final answer, but as a tool to challenge our thinking, question assumptions, and reveal what we might be missing.

For example, instead of asking AI “What is the answer?”, a better approach is to ask “What could I be missing?” or “What is the strongest argument against this idea?” That small shift helps expose blind spots instead of hiding them.

This is where “perspective-taking” and “humility” become essential, according to Ming. Perspective-taking means being willing to look at a situation from another angle, even if it challenges your own view. Humility means accepting that your current understanding is incomplete.

For example, in medicine, a second opinion often reveals something the first doctor missed. In business, a team member with a different background may notice risks the leadership overlooked. These are not signs of weakness—they are ways of reducing blind spots.

At a deeper level, intelligence is not just about reasoning or prediction. It is about knowing the limits of your own perception and actively trying to test those limits. The most common failures, whether in humans or AI, do not come from a lack of intelligence, but from unseen gaps in what we assumed was already understood.

So, the real competition with AI is not about who is smarter. It is about who is more aware of what they are not seeing: what we miss, what we assume is obvious, and what we never think to question.

The challenge ahead is not just developing more intelligence, but learning how to see more carefully, with the humility to recognize that every frame leaves something important outside of view.