Most of the decisions we make are taken before we fully understand the problem we are trying to solve. We navigate uncertainty, often without articulating the assumptions that shape our judgments.

Machines, by contrast, require those assumptions to be specified, and those specifications structure both their predictions and their errors. Machine learning systems are often evaluated by the distribution of their errors. A model may be tuned differently depending on whether false reassurance or false alarm is judged more consequential. The system does not determine these priorities. The weighting of outcomes reflects external, and ultimately human, considerations.

The difference between human and machine reasoning may lie less in the presence of error than in how error is interpreted and categorized. That difference becomes most visible when the structure of the problem is unclear. What we can learn from it is not how to eliminate error but how to better understand the structure of our own errors.

In the film Zero Dark Thirty, which depicts the nearly decade-long hunt for Osama bin Laden (2001–2011), an exchange during the protracted search captures something fundamental about decision-making under uncertainty.

CIA Intelligence Officer (Dan):

“We don’t know what we don’t know.”

Islamabad CIA Station Chief Bradley:

“What the f*ck is that supposed to mean?”

Dan:

“It’s a tautology.”


The exchange draws laughter in part because it recalls the February 2002 news briefing in which then U.S. Secretary of Defense Donald Rumsfeld famously discussed “known unknowns.” Responding to a question about the lack of evidence concerning weapons of mass destruction in Iraq, he stated, “there are known unknowns; that is to say, we know there are some things we do not know.”

Both moments focus on the same underlying problem: not just missing information, but the fact that the contours of what is missing cannot be clearly delineated. The unknown thus remains impossible to observe and impossible to structure.

What follows in the film reflects a condition that is not confined to intelligence work. Fragments of information are assembled into competing interpretations, none of which can be verified before a decision must be made. The same data can be read in different ways: absence may be interpreted as concealment or as a sign that nothing is there. Even confident judgments, whether based on data or instinct, do not eliminate this uncertainty. In such settings, decisions are taken in the absence of resolution.

On the appeal of structure

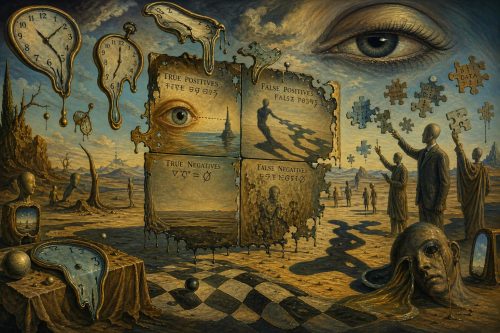

The analytical systems we increasingly rely on have sought to impose greater structure on uncertainty. One such tool, common in machine learning, is the confusion matrix. For a yes-or-no prediction, it cross-tabulates what was predicted against what actually occurred, sorting every outcome into one of four categories: true positives, false positives, true negatives, and false negatives. This makes error visible and allows us to distinguish among its forms. The four categories force us to make explicit distinctions that our judgment often leaves implicit, and they replace the crude binary of right and wrong with a more differentiated account of how predictions can fail.
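As a minimal illustration of the four categories, the Python sketch below tallies paired predictions and outcomes into the cells of a binary confusion matrix. The function name `confusion_counts` and the eight cases are hypothetical, invented for illustration rather than drawn from any real system; libraries such as scikit-learn provide their own confusion-matrix utilities.

```python
from collections import Counter


def confusion_counts(actual, predicted):
    """Tally paired binary outcomes into the four cells of a confusion matrix.

    `actual` and `predicted` are equal-length sequences of booleans, where
    True means "positive" (condition present, or alarm raised).
    """
    if len(actual) != len(predicted):
        raise ValueError("actual and predicted must have the same length")
    cells = Counter()
    for truth, guess in zip(actual, predicted):
        if guess and truth:
            cells["TP"] += 1  # alarm raised, condition present
        elif guess:
            cells["FP"] += 1  # alarm raised, nothing there: false alarm
        elif not truth:
            cells["TN"] += 1  # no alarm, nothing there: correct reassurance
        else:
            cells["FN"] += 1  # no alarm, condition present: a miss
    # Report all four cells, including any that stayed at zero.
    return {cell: cells[cell] for cell in ("TP", "FP", "TN", "FN")}


# Hypothetical outcomes for eight cases, for illustration only.
actual = [True, True, False, False, True, False, False, True]
predicted = [True, False, True, False, True, False, False, False]

counts = confusion_counts(actual, predicted)
print(counts)
print("correct:", counts["TP"] + counts["TN"], "of", len(actual))
```

With these invented cases, five of eight predictions are correct, but the three errors are not alike: two are misses (false negatives) and one is a false alarm (false positive). A single “right or wrong” tally would hide that split.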

In practice, it can be harder to tell how stable these categories are and what counts as a “positive” or a “negative” outcome.

Consider the use of screening tests in medicine. A result may be classified as positive or negative, yet what constitutes a meaningful abnormality may shift over time, and the consequences of false reassurance and false alarm are rarely symmetrical. On one side lies a missed diagnosis; on the other, the burden of a suspected diagnosis that later proves to be a false alarm. The categories appear fixed, but in practice they are subject to revision and interpretation.
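The asymmetry can be sketched in a few lines of Python. The sketch assumes, for illustration only, that a screening test yields a score between 0 and 1, that a case is flagged when its score meets a chosen threshold, and that each miss and each false alarm carries a fixed cost (`cost_fn` and `cost_fp`, hypothetical parameters). The scores and outcomes are invented. The aim is only to show that identical data yield a different “best” threshold depending on which error is judged more consequential, and that this weighting has to be supplied from outside the model.

```python
def total_cost(scores, actual, threshold, cost_fn, cost_fp):
    """Total cost of flagging every case whose score is >= threshold."""
    cost = 0.0
    for score, truth in zip(scores, actual):
        flagged = score >= threshold
        if truth and not flagged:
            cost += cost_fn  # missed case (false negative)
        elif flagged and not truth:
            cost += cost_fp  # false alarm (false positive)
    return cost


def best_threshold(scores, actual, cost_fn, cost_fp):
    """Return the candidate threshold with the lowest total cost."""
    # Candidates: every observed score, plus infinity (which flags nothing).
    candidates = sorted(set(scores)) + [float("inf")]
    return min(
        candidates,
        key=lambda t: total_cost(scores, actual, t, cost_fn, cost_fp),
    )


# Hypothetical screening scores and true outcomes (True = condition present).
scores = [0.10, 0.20, 0.35, 0.40, 0.55, 0.60, 0.80, 0.90]
actual = [False, False, True, False, False, True, True, True]

# A miss judged ten times worse than a false alarm...
print(best_threshold(scores, actual, cost_fn=10, cost_fp=1))
# ...and the reverse weighting.
print(best_threshold(scores, actual, cost_fn=1, cost_fp=10))
```

With these invented inputs, weighting a miss ten times more heavily selects a threshold of 0.35 (no misses, two false alarms); reversing the weights selects 0.60 (one miss, no false alarms). Nothing in the data decides between the two.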


The deeper lesson is that good clinical judgment requires more than experience; it requires an awareness of the limits of intuition. In glaucoma care, as in all of medicine, the physician must constantly ask: What am I missing? What assumptions am I making? How likely is this pattern to occur by chance? Recognizing that we “don’t know what we don’t know” is not a weakness; it is the starting point of disciplined, evidence-based care.

Outside such structured settings, human decision-making tends to compensate in other ways.

For instance, when evaluating a new job opportunity, we may anchor on a single compelling interaction or concern, allowing it to stand in for a more complex set of uncertainties about role, culture, and long-term fit.

Compression and simplification

In domains such as clinical judgment, intelligence analysis, or organizational and personal decision-making, then, the difficulty lies not only in the possibility that predictions are mistaken but in how correctness is defined. Faced with such ambiguity, human decision-making tends toward simplification.

Yet the evaluative framework itself often remains uncertain or contested. In this respect, we often find ourselves deciding on the basis of what we do know, because doing so provides relief. A range of possible outcomes is compressed into a smaller set of categorical judgments: a course of action is deemed sound or unsound, a decision correct or in error. Such compression affords a degree of clarity and expediency, though not without cost: the unknowns remain unknown.

A decision such as accepting a new job is rarely a single proposition. It is better understood as a set of uncertainties about colleagues, organizational culture, performance expectations, and the possible gap between what each party (the candidate and the organization) now expects and what each claimed about itself to secure the match in the first place. Each uncertainty carries its own likelihood of going wrong and its own consequences if it does. These elements are seldom articulated explicitly; instead, they are aggregated into a single judgment about whether we will like the new job.

A similar issue arises in legal reasoning. In U.S. administrative law, a government agency’s decision can be challenged as “arbitrary and capricious” when it rests on incomplete reasoning, ignores relevant evidence, relies on flawed assumptions, or fails to consider alternative explanations: in essence, when the decision-maker acts as though they understand the full picture when they do not.

In this sense, the legal standard mirrors the logic of the confusion matrix: it demands not only a conclusion but an awareness of what is not known and a disciplined effort to account for it. The question is not only whether the decision was correct but whether different kinds of error were considered and relevant possibilities adequately weighed.

Acting in advance of clarity

The clarity afforded by a confusion matrix is typically retrospective: it emerges only once outcomes can be classified and assessed. In the circumstances depicted in Zero Dark Thirty, however, the decision must be taken in advance of such clarity. The assertion that “we don’t know what we don’t know” is then less a tautology than an acknowledgment of the conditions under which the decision is being made.

The more pressing question may not be how to eliminate error, but how to better apprehend the structure—or absence of structure—within which decisions are made.

One way to do this is to reflect on our own “error matrix” over time: not just whether our professional, personal, or relational decisions were right or wrong, but how we might have been wrong and why. Do we tend to overreact to weak signals, treating noise as meaningful (false positives)? Or do we underreact, missing important changes until they become undeniable (false negatives)? Do we act too quickly, or hesitate for too long?

We cannot eliminate uncertainty. But if we think in terms of error matrices more often, we can make our own patterns of judgment more visible and, over time, perhaps calibrate them better.

The authors thank Maxim Pike Harrell for his insights.